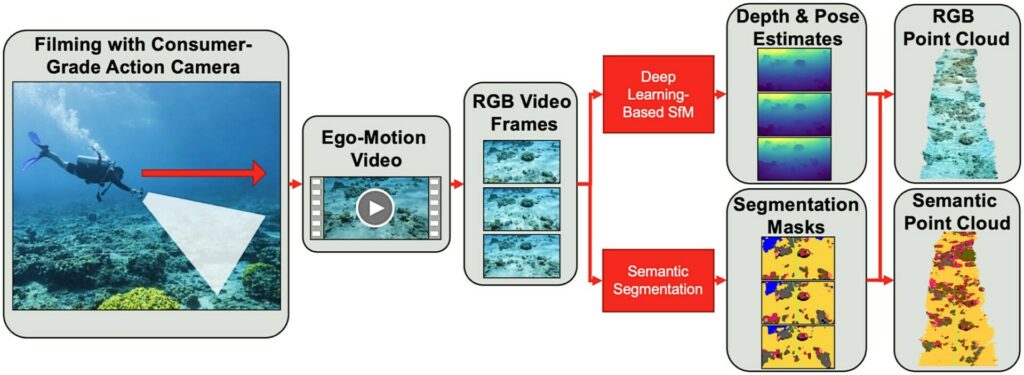

An artificial intelligence system developed at EPFL, the public research university in Lausanne, Switzerland, is claimed to be able to produce detailed 3D maps of coral reefs even from amateur divers’ questionably lit video footage – in a matter of minutes.

The data required for the DeepReefMap system can be collected by anyone equipped with standard diving gear and a commercially available camera.

All they have to do is swim slowly above a reef for several hundred metres, capturing video footage of the view below as they go.

The only limits are the camera’s battery life and the amount of air in the diver’s tank, says EPFL, claiming that the development marks “a major leap forward in deep-sea exploration and conservation capabilities for organisations such as the Transnational Red Sea Centre (TRSC)” – a scientific research body that has been hosted by EPFL since 2019.

The TRSC has been carrying out in-depth studies on those Red Sea coral species that have proven most resistant to climate-related stress, with its initiative also serving as a testing ground for the DeepReefMap system.

Maps in moments

Developed at the Environmental Computational Science & Earth Observation Laboratory (ECEO) within EPFL’s School of Architecture, Civil & Environmental Engineering (ENAC), DeepReefMap is said to have the power to produce several hundred metres of 3D reef maps in moments.

Not only that, but it can also recognise the distinctive features and characteristics of the corals and classify them.

“With this new system, anyone can play a part in mapping the world’s coral reefs,” says TRSC projects co-ordinator Samuel Gardaz. “It will really spur on research in this field by reducing the workload, the amount of equipment and logistics, and the IT-related costs.”

Obtaining 3D coral-reef maps using conventional methods has proven challenging and expensive in the past, says EPFL.

Computationally intensive reconstructions are based on several hundred images of the same portion of reef of very limited size (a few dozen metres) taken from many different reference points, and only specialist divers have been able to obtain such images.

These factors have severely limited coral-reef plotting in parts of the world lacking the necessary technical expertise, and have discouraged the monitoring of extensive reefs covering hundreds of metres, or even kilometres.

Six-camera array

While data on small reefs can be captured easily for DeepReefMap by amateur divers, to obtain data over a wider area the EPFL researchers have developed a PVC structure that holds six cameras – three facing forward and three back. The cameras are spaced 1m apart and the set-up is still operated by a single diver.

This six-camera array is said to offer a low-cost option for local dive-teams operating on limited budgets.

Once the footage is uploaded, DeepReefMap is said to have no problem with poor lighting or the diffraction and caustic effects often found in underwater images.

“Deep neural networks learn to adapt to these conditions, which are suboptimal for computer vision algorithms.”

Existing 3D mapping programs work reliably only under precise lighting conditions and with high-resolution images, and are “also limited when it comes to scale”, according to ECEO professor Devis Tuia.

“At a resolution where individual corals can be identified, the biggest 3D maps are several metres in length, which requires an enormous amount of processing time,” he says. “With DeepReefMap, we’re restricted only by how long the diver can stay under water.”

Health and shape

The researchers also claim to have made life easier for field biologists by including “semantic segmentation algorithms” that can classify and quantify corals according to two characteristics.

The first characteristic is health – from highly colourful (suggesting good health) to white (indicative of bleaching) and covered in algae (denoting death) – and the second is shape, using an internationally recognised scale to classify the types of corals most commonly found in the shallow reefs of the Red Sea (branching, boulder, plate and soft).
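To give a sense of what “quantifying” corals from a segmentation output might involve, here is a minimal Python sketch. It is not DeepReefMap’s code: the function name `class_cover`, the integer label encoding and the toy mask are all assumptions made for illustration. It simply measures what fraction of a labelled frame falls into each of the shape categories named above.

```python
from collections import Counter

# Hypothetical label encoding: the categories come from the article,
# but the integer ids (0-4) are invented for this illustration.
SHAPE_CLASSES = ["background", "branching", "boulder", "plate", "soft"]


def class_cover(mask, class_names):
    """Return the fraction of pixels assigned to each class in a label mask.

    `mask` is a list of rows, each row a list of integer class ids.
    """
    flat = [label for row in mask for label in row]
    counts = Counter(flat)
    total = len(flat)
    return {name: counts.get(i, 0) / total for i, name in enumerate(class_names)}


# Toy 4x4 mask standing in for one segmented video frame.
mask = [
    [0, 1, 1, 2],
    [0, 1, 2, 2],
    [3, 3, 2, 2],
    [0, 4, 4, 2],
]

cover = class_cover(mask, SHAPE_CLASSES)
for name, fraction in cover.items():
    print(f"{name}: {fraction:.1%}")
```

The same counting approach would apply to a health mask (colourful, bleached, algae-covered), and averaging the per-frame fractions along a transect would give a rough cover estimate for the whole swim.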

“Our aim was to develop a system that would prove useful to scientists working in the field and that could be rolled out quickly and widely,” says Jonathan Sauder, who worked on the development of DeepReefMap for his PhD thesis.

“Djibouti, for instance, has 400km of coastline. Our method doesn’t require any expensive hardware. All it takes is a computer with a basic graphics processing unit. The semantic segmentation and 3D reconstruction happen at the same speed as the video playback.”

The researchers believe the technology will make it easy to monitor how reefs change over time and to identify priority conservation areas.

It will also give scientists a starting point for adding other data such as diversity and richness of reef species, population genetics, adaptive potential of corals to warmer waters, and local pollution in reefs. This process could eventually lead to the creation of a full digital twin of a reef.

DeepReefMap could also be used in mangroves and other shallow-water habitats, and serve as a guide in the exploration of deeper marine ecosystems, says EPFL.

“The reconstruction capability built into our AI system could easily be employed in other settings, although it’ll take time to train the neural networks to classify species in new environments,” says Tuia.

Shipwreck-mapping?

“I don’t expect commercial use (both in the sense of use in commercial diving, as well as selling a product) soon,” Jonathan Sauder told Divernet. “The method will most likely stay under development, with more user-friendly open-source releases coming soon.

“3D vision is a hot field in machine learning / robotics research. Things are moving extremely fast and I expect real-time mapping to have its ‘ChatGPT moment’ within the next years, with a sudden widespread availability of very strong algorithms, driven by large companies with seemingly infinite research and engineering budgets, but we’ll see!”

Could the system be adapted for 3D mapping of shipwrecks? “The 3D mapping is a learned algorithm – meaning that it learns from a set of training videos.

“In our scenario, we train the mapping system on reef videos. I suspect right now it would work OK-ish on shipwrecks, but could work a lot better if trained on large amounts of videos from such scenes.

“For now, I would expect the best method for getting cool 3D reconstructions of shipwrecks to still be a conventional 3D mapping workflow of taking many high-res photos, calculating the camera poses with a Structure-from-Motion software such as Agisoft Metashape or COLMAP, and then potentially rendering these nicely as a Gaussian Splat.”

A paper on the reef-mapping research was recently published in the journal Methods In Ecology And Evolution.
